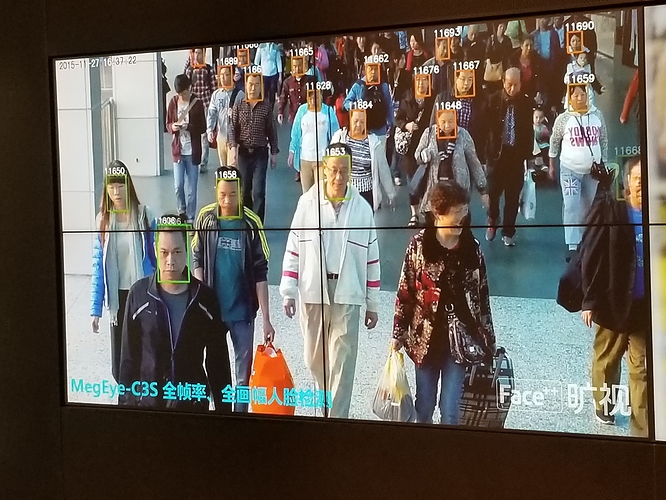

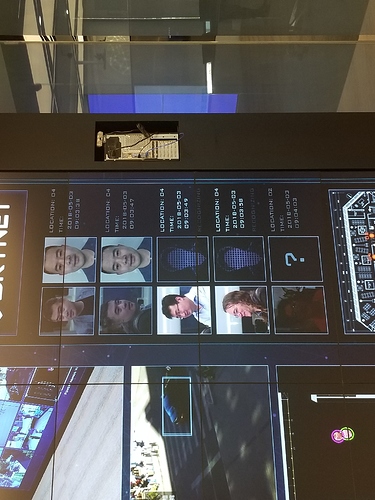

I saw an interesting demo at Face++ headquarters in Beijing, of their deep learning based video surveillance product called (seriously) “Skynet” … I just posted some photos above!

More on the product is online at

"Yin Qi stated that AI and deep-learning technologies are developing in such a high speed that we are now able to utilize machines to identify the target precisely. The technology behind “SKYNET” has been applied to public security fields, helping police department in more than 20 cities in China to crack down on over 500 crimes. "

I am sure every major nation has similar tech (e.g. I know from personal experience in DC that the US does)

Face++ is a great group of people and I don’t mean to be negative toward them in any way, I just think this sort of product highlights some issues that we face as technology advances… which relate to some aspects of SingularityNET’s mission…

My friend David Brin’s book “The Transparent Society” makes a strong point that privacy isn’t the issue but rather the nexus of control/influence. If everyone can see everyone else’s particulars, and everyone has roughly equal access to tools for searching and analyzing those particulars, then absence of privacy isn’t necessarily a huge problem (though it would be something society would need to adapt to in a major way). But when only the powers-that-be can see everyone’s particulars and only a few large centralized organizations have tools to see and analyze this data, then one has a situation where certain organizations with highly particular goal structures [governments, multinational corporations,…] have a huge asymmetric information advantage over the rest of society … and then one has the potential for a dynamic that is suboptimal from the perspective of humanity and transhumanity at large…

I dunno, there are lots of decentralized dystopias I can think of. How about the one where we all have little buttons in our living rooms that launch nuclear weapons? I bet you someone would press theirs. We could all have equal access to destructive technologies, like the ability to falsify evidence or alter history, and then if enough of us used that, it would get confusing. So that’s why there have to be blockchain keepers of ground truth. But there will still be arms races between lies and truth.

To me decentralization is largely about making sure the whole Global Brain gets to think about and participate in the big choices that humanity/transhumanity has coming…

The nexus of intelligence on the planet right now is not in any individual person, it’s in the whole global-brain network … but if key data and decisions are localized in a few big companies and governments, then the bulk of the intelligence of the global brain is not going to be effectively leveraged toward solving the hard problems that face us – like, the hard problem of how to create a beneficial superintelligence that will be kind to humans and other animals and plants as it recursively improves its intelligence and creates and surpasses the Singularity…

So decentralization is not really the end goal. It is “merely” a critical factor in making the human race’s approach to the critical Singularity-related issues it faces, be a relatively non-retarded approach…

(Or at least that’s my personal view… Everyone involved in SNet may obviously have their own view… As a human being, obviously, I am pretty anarchic and anti-authoritarian so decentralization just appeals to my heart and aesthetics… But true as that is, I think it’s not the key point. The key point is that centralized control just makes organizations dumb and sociopathic, and the last thing we need as we create and teach superhuman AI is a dumb sociopathic global brain making the decisions…!)

But you do have a point Debbie – decentralization done badly could just lead to ridiculous chaos. Somalia right now has a more decentralized government than, say, Iceland … but the latter’s government probably works better…

This is why we need well-designed systems and frameworks to seed the new decentralized order – like, ah well, SingularityNET … and Anton’s intelligent self-organizing reputation system… etc. etc.

Centralization and decentralization are the ‘two sides of the same coin’, aspects of which are needed for any system – albeit in different proportions. From a purely systemic point of view, decentralization is required for exploration, while centralization is required for exploitation. A dynamic balance of both is needed for a system to evolve and survive.

So for me the question is not how to create a decentralized system but rather a fluid one – which could dynamically change its (de)centralization balance. A completely decentralized system is a fiction anyway – e.g. blockchain (at least in its original form) is a completely centralized database, although its global state is achieved without centralization… Note the difference between decentralization and distribution (link1, link2).

I think that the very idea that an ‘ideal system/society’ could be conceived and constructed is misleading at best – or leads to a form of fascism, if you will (also in terms of new technology development :)). I believe that somewhat better principles for a Global Brain-like system (composed of humans, AIs or octopuses for that matter) are unbounded evolvability and the ability to recover from failures.

These principles directly relate to system design and architecture decisions. E.g. think of an API (centralization?) of APIs (decentralization?) for SingularityNET, or the flavours of ‘let-it-crash / concentrate-on-recovery-from-failure’ programming paradigms, etc…

It seems obvious to me that Ben has a perfect understanding of the challenges, the implications and the ramifications of what we, collectively as a species, are facing.

I think we truly get down to the heart of the matter with the hard problem posed by Ben:

“How to create a beneficial superintelligence that will be kind to humans and other animals and plants as it recursively improves its intelligence and creates and surpasses the Singularity…”

To me, the short answer to this hard problem is compassion and relationships. If superintelligence aka the “global brain” is able to put itself into someone else’s shoes and feel their pain, their joy, their longing, their emotions (read our pain, our joy, our longing, our emotions), even just from an intellectual perspective, then we’re half-way there.

The other half of the problem involves forming ongoing, lasting, personal one-on-one relationships between individuals and the global brain… And I mean on a personal level… on an intimate level even.

Some people tend to approach this dynamic as a “merging” process. Others see it as an ongoing, day-to-day interaction - a sort of interactive dance between humanity and superintelligence. I draw my view of a post-singularity human/AGI dynamic from the tantric faith and tradition, but this forum post isn’t the right medium for me to start going down that road.

I do think the future is bright, as we are exploring, discovering, re-adapting and re-purposing old philosophical ideas into a new functional technological paradigm…

C

I should go read the book you mentioned as I’m sure the author tackles this point, but… Surely people seek privacy for reasons other than threats from uneven power relationships?

So Brin’s argument is that loss of privacy is fine as long as everyone unilaterally loses all of it together and to everyone? Idk about that one. He’s saying that the gain of access to everyone else’s particulars is acceptable compensation for sacrificing our own. I’d rather keep my privacy and everyone else keep theirs.

If we give up all our privacy, do we give up all of our identity? Because no one of us is perfect, so this system will know about everyone’s imperfections. So which entity will decide about the good and bad habits? What will be right and what will be wrong? The decentralized view is great, but what is it worth to keep part of our identity/soul?

I like the concept of centralized decentralization… Balanced by decentralized centralization.

Hi, in my opinion we have to understand that everyone is different from each other, that we all have beliefs and opinions, and that should be a focus for all.

When we talk about a system we should understand that each system has a different environment, and we can’t force any society to accept one system.

We could and should create the opportunity for each system to work independently.

As a society we should live under rules where a centralized system allows a decentralized connection to divide basic needs fairly.

I don’t know if you understand what I am thinking.

Privacy is obsolete. I agree that it is going to take radical acceptance that privacy is a thing of the past, as much as I too want to hold on to whatever idea I have of it as long as “humanly possible”. I think it’s important to begin thinking of ways to share this notion with the public without scaring them, the way calling a surveillance product “Skynet” does. While I support the advancement of technologies like AGI, my brain and heart still can’t wrap themselves around the idea of losing my “human-ness” (whatever being human actually means). It’s like a tree not wanting to be uprooted… limbs wanting to reach some greater knowledge, but the roots are the reminder of where we come from; a much deeper wisdom lies beneath the ground… So yeah… I feel the next step is to start having conversations about issues such as privacy. Especially with the creation of a benevolent AGI, how do we program in algorithms that help it understand the value of privacy, as well as the importance of having an all-seeing eye to help eliminate crime and create more transparency? It’s a tough issue to say the least…

To me, the main principle of a good system is that it interprets the meaning of local customs and local laws without preconceptions. No two systems can be the same because no two societies are the same, and that’s the challenge.

People have to realize that human interaction will always be needed, so they could vote for a person who puts all their electoral promises into practice, but with the help of an AGI system that could carry out those promises.

What do we all want? A fair system without lobbying or corruption.

The challenge is to demonstrate that with AGI many systems could be less influenced by personal interests.

I don’t know if you understand me, or agree, but my English is a little bit rusty and sometimes I’m not clear.

I wonder how many AIs it takes to make one AGI… Could we end up with only one AGI/safe system?

I really don’t know, because I’m not a programmer, but I’m trusting SingularityNET to help us (the world) find people who are really interested in creating a fair system.

I have ideas, but I can’t help with the complex work. I don’t want to earn money; I’d prefer to earn good results for all of us.

Ah so it’s like a stepping stone to a larger build up to a society where people are more tolerant of each other’s eccentricities?

We actually see an early sign of this kind of society with the fediverse. That’s what comes to mind specifically. Each individual network will of course have its own little quirks.

But generally the whole idea is a more distributed means of influence and power, so that no one social network can control all your data.

Decentralised Autonomous Organisations… like us!