How do we use the auditory signals generated by the ear to recognize events in the world, such as running water, a crackling fire, music, or conversation between speakers? In this unit, you will learn about the structure and function of the auditory system, the nature of sound, and how sound can be used to recognize sound textures and speech. Physiologically inspired models of these processes capture the neural mechanisms by which the brain processes this information.

Part 1 of Josh McDermott’s lecture provides an overview of the auditory system and neural encoding of sound, and explores the problem of recognizing sound textures. A model of this process exploits statistical properties of the frequency content of an incoming sound.

Part 2 of Josh McDermott’s lecture delves more deeply into the ability of human listeners to recognize sound textures, using the synthesis of artificial sounds as a powerful tool to test a model of this process. The lecture also examines other cues used to analyze the content of a scene from the sounds that it produces.

Nancy Kanwisher presents fMRI studies that led to the discovery of regions of auditory cortex that are specialized for the analysis of particular classes of sound such as music and speech.

Hynek Hermansky addresses the problem of speech processing, beginning with the structure of speech signals, how they are generated by a speaker, how speech is initially processed in the brain, and key aspects of auditory perception. The lecture then reviews the history of speech recognition in machines.

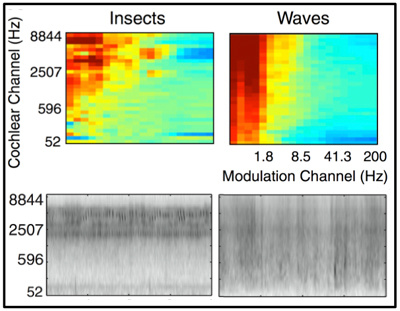

How do we recognize the source of a sound texture, such as the sound of insects or waves? Josh McDermott and colleagues propose a model of this process that uses statistics of the frequency content of the sounds, and the modulation of this content over time, depicted in these spectrograms (bottom) and plots of modulation power (top).

(Courtesy of Elsevier, Inc., http://www.sciencedirect.com. Used with permission. Source: McDermott, Josh H., and Eero P. Simoncelli. “Sound texture perception via statistics of the auditory periphery: evidence from sound synthesis.” Neuron 71, no. 5 (2011): 926-940.)
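To make the idea in the figure caption concrete, here is a minimal Python sketch of summarizing a sound by statistics of its frequency content and by the modulation of that content over time. It is an illustration under simplifying assumptions, not the McDermott and Simoncelli model: it uses a plain short-time Fourier transform with linearly spaced bands instead of a cochlear filterbank, and the function names `spectrogram` and `texture_statistics`, along with all parameter values, are hypothetical choices made for this example.

```python
import numpy as np


def spectrogram(signal, sample_rate, frame_len=512, hop=128):
    """Magnitude spectrogram from a Hann-windowed short-time Fourier transform.

    Returns an array of shape (n_freqs, n_frames) and the frame rate in Hz.
    """
    window = np.hanning(frame_len)
    n_frames = 1 + (len(signal) - frame_len) // hop
    frames = np.stack([
        signal[i * hop: i * hop + frame_len] * window
        for i in range(n_frames)
    ])
    magnitudes = np.abs(np.fft.rfft(frames, axis=1))  # (n_frames, n_freqs)
    return magnitudes.T, sample_rate / hop


def texture_statistics(signal, sample_rate, n_bands=8):
    """Per-band envelope statistics and modulation power (simplified sketch)."""
    spec, frame_rate = spectrogram(signal, sample_rate)

    # Assumption: pool frequency bins into a few linearly spaced bands.
    # A physiologically inspired model would use cochlear-like filters.
    bands = np.array_split(spec, n_bands, axis=0)
    envelopes = np.stack([band.mean(axis=0) for band in bands])

    # Statistics of the frequency content: mean and variance of each band.
    mean = envelopes.mean(axis=1)
    variance = envelopes.var(axis=1)

    # Modulation of that content over time: power spectrum of each
    # band envelope after removing its mean.
    centered = envelopes - mean[:, None]
    mod_power = np.abs(np.fft.rfft(centered, axis=1)) ** 2
    mod_freqs = np.fft.rfftfreq(envelopes.shape[1], d=1.0 / frame_rate)

    return {
        "mean": mean,
        "variance": variance,
        "mod_power": mod_power,
        "mod_freqs": mod_freqs,
    }
```

Applied to noise whose amplitude is modulated at a fixed rate, the modulation power returned by this sketch should concentrate near that rate, loosely analogous to the modulation power plots in the figure.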

Part 2 of Hynek Hermansky’s lecture examines the key challenge of recognizing speech in a way that is invariant to large speaker variations and unwanted noise in the auditory signal, and how insights from human audition can inform models of speech processing.

A panel of experts in vision and hearing reflects on the similarities and differences between these two modalities, and on how exploiting the synergies between them may accelerate research on vision and audition in brains and machines.

Unit Activities

Useful Background

- Introductions to neuroscience and statistics

- The lecture by Hynek Hermansky requires background in signal processing