In the video below, I walk you through the basics of the AGI architecture I’ve been working on for 5 years. Before the video, there is some text to solidify key concepts so that the whole thing is easier to understand. The design is meant to be very simple and to beautifully explain a lot of how natural thinking solves big life problems. Below is how my text predictor code works (100MB compresses to approx. 21.8MB; the world best is 14.8MB). You will learn some fundamental things all good text predictors do using “frequency”. Frequency is also used to discover that the word cat=dog, because we must see a shared context at least twice (e.g. ‘cat eats’ and ‘dog eats’) to notice that both words are used the same way. Note that this compression is for evaluation and is different from compressing a network to learn a better model.

My algorithm slides a 17-letter window along the input file 1 letter (byte) at a time, updating a tree as it sees new data. The tree’s branches are 17 nodes long because, after the window finishes the search process described next, it is added to the tree as a branch, and the count of every node it passes is incremented. For each step the window takes, the algorithm runs 17 different searches of the tree, each with a context one letter longer than the last. The children of the last matched node (the letters that followed that context) are the predictions, with the counts seen so far in the file. The layer 1 nodes (the root’s children) are predictions too and need no match. So the tree stores the frequency of every 1, 2, 3, …, 17-letter string seen so far, and these children are what allow you to predict/compress the next letter accurately.
These 17 sets of predictions must be mixed, because while the longest set is the most accurate, it has the fewest statistics, sometimes only 2 counts. We start with the longest match found, e.g. a 14-letter match in the tree. The 14th set of predictions may say it has seen a=44, b=33, f=25, w=7 come next. I sum the set’s counts to get a total (here 109), then divide each count by the total to get probabilities that all add up to 1 (100%), e.g. a=0.404 and b=0.303. We still have 13 sets to mix, so each of these probabilities must give up some share. So I check the set’s total count against a Wanted Roof, e.g. 109 vs. 300 (maybe we don’t even need to mix lower sets if we got enough stats); since 109/300 ≈ 0.36, I scale each prediction in this set down to about 1/3 of its value, and we still desire roughly 2/3 (about 66%) more stats. For the next set, if we have, say, 200 vs. 300, it takes 2/3 of the 66% still desired, meaning we still desire 22%, not 66% - 2/3 = 0%! I take away the share got OF the share still desired. Therefore a little of the lower sets always leaks in, which is better because we can never be sure, even if we surpass the Roof by a lot. Besides, it gave better results.
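
A minimal Python sketch of the tree and the Roof mixing described above, assuming a single fixed Roof of 300 (the post’s Roof also depends on the set, as explained next) and no activation curve, global weights, or escape set. `Node`, `ContextTree`, `mix`, and their parameters are hypothetical names, not the author’s code. Windows are only added once they are full, each as one branch from the root, and the search tries contexts of 16 down to 0 letters (17 searches with the default `max_order=17`).

```python
from collections import defaultdict


class Node:
    """A trie node: how many times a window passed through it, plus its children."""

    __slots__ = ("count", "kids")

    def __init__(self):
        self.count = 0
        self.kids = {}


class ContextTree:
    def __init__(self, max_order=17):
        self.root = Node()
        self.max_order = max_order  # window length

    def add_window(self, window):
        """Add a window as one branch from the root, counting every node it passes."""
        node = self.root
        for ch in window:
            node = node.kids.setdefault(ch, Node())
            node.count += 1

    def update(self, text, i):
        """After coding text[i], add the full window ending at i (once it fits)."""
        start = i + 1 - self.max_order
        if start >= 0:
            self.add_window(text[start:i + 1])

    def predictions(self, history):
        """One {letter: count} set per matched context, longest context first.

        The last set is the root's children (order 0), which needs no match.
        """
        sets = []
        for k in range(min(self.max_order - 1, len(history)), -1, -1):
            node = self.root
            for ch in history[len(history) - k:]:
                node = node.kids.get(ch)
                if node is None:
                    break
            if node is not None and node.kids:
                sets.append({c: n.count for c, n in node.kids.items()})
        return sets


def mix(sets, roof=300.0):
    """Each set takes total/roof (capped at 1) of the share still desired."""
    mixed = defaultdict(float)
    desired = 1.0  # share of the probability mass not yet handed out
    for counts in sets:
        total = sum(counts.values())
        share = desired * min(total / roof, 1.0)
        for sym, c in counts.items():
            mixed[sym] += share * c / total
        desired -= share
        if desired <= 0.0:
            break  # Roof reached: lower sets are skipped
    assigned = 1.0 - desired
    if assigned <= 0.0:
        return {}, desired
    return {s: p / assigned for s, p in mixed.items()}, desired


if __name__ == "__main__":
    text = "abracadabra"
    tree = ContextTree(max_order=3)  # short window so the tiny demo has matches
    for i in range(len(text)):
        tree.update(text, i)
    print(tree.predictions(text))
    # [{'c': 1}, {'b': 2, 'c': 1, 'd': 1}, {'a': 4, 'b': 2, 'r': 1, 'c': 1, 'd': 1}]

    # The worked example: 109 vs. 300, then 200 vs. 300.
    probs, still_desired = mix([{"a": 44, "b": 33, "f": 25, "w": 7},
                                {"a": 120, "b": 50, "c": 30}])
    print(round(still_desired, 2))  # 0.21
```

Computed exactly, the share still desired after the second set is about 21%; the 22% above comes from rounding 0.64 up to 2/3. In this sketch the leftover share is simply renormalized away; in the post it would go to the lower sets and the escape set.
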
But the Roof is decided by how many different symbols are predicted in the set, so if a set has 2, the Roof may be 8 counts wanted. The Roof also depends slightly on which set we are on: if set #14 has 4 different letters, the Roof may be 33, but for set #5 with 4 letters it may be 26. Also, based on the Roof’s size, the bend of a curve is modified. This Activation Function curve/threshold makes a small total count in a set even smaller and a large one even larger (it isn’t used in the Arithmetic Coding; it only decides how much share this set gets in the mixer). It is meant to be an exponential activation. Finally, a global weight is given to each set, e.g. the 14th set always gets 70% of the weight it was going to get, lol. I hardcoded these numbers for now, but of course the code isn’t grossly large. If they were adaptive and based on the data, the compression would be even better. I just noticed I do exit the mixing before reaching the lower sets if the Roof is ever surpassed; I’ll have to test if this is useful.
The Arithmetic Coder takes the combined sets, i.e. the predictions of all sets are added per symbol (a, b, c + a, b, c + a, b, c … = a, b, c) and normalized so they add up to 1. The AC starts with a low and high bound of 0 and 1, takes the width between them, and subtracts each symbol’s share of that width in turn until it reaches the slice of the actual next letter in the window (the same process whether compressing or decompressing). So say we stop once we reach b in our set a, b, c: that slice becomes the new range, e.g. 0.22 to 0.45. Once the window on the file takes another step, we take the new width (0.23) and start subtracting again. The encoding decimal keeps getting more precise, storing the whole file.
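
A minimal sketch of the interval narrowing described above, using Python’s `fractions.Fraction` so there is no precision limit yet (carrying away digits to stay within float precision is covered in the next paragraph). It assumes a fixed, hand-picked probability table instead of the mixed predictions; `narrow`, `encode`, and `decode` are hypothetical names. Symbols are visited in sorted order so the encoder and decoder split the range identically.

```python
from fractions import Fraction


def narrow(low, high, probs, symbol):
    """Split [low, high) into slices sized by probability; return symbol's slice."""
    width = high - low
    cum = Fraction(0)
    for sym in sorted(probs):  # same order on both sides
        if sym == symbol:
            return low + width * cum, low + width * (cum + probs[sym])
        cum += probs[sym]
    raise KeyError(symbol)


def encode(message, probs):
    low, high = Fraction(0), Fraction(1)
    for sym in message:
        low, high = narrow(low, high, probs, sym)
    return low, high  # any number in [low, high) identifies the whole message


def decode(code, length, probs):
    low, high = Fraction(0), Fraction(1)
    out = []
    for _ in range(length):
        width = high - low
        cum = Fraction(0)
        for sym in sorted(probs):
            nxt = cum + probs[sym]
            if code < low + width * nxt:  # code falls inside this symbol's slice
                out.append(sym)
                low, high = low + width * cum, low + width * nxt
                break
            cum = nxt
    return "".join(out)


if __name__ == "__main__":
    probs = {"a": Fraction(1, 2), "b": Fraction(1, 4), "c": Fraction(1, 4)}
    low, high = encode("abac", probs)
    print(low, high)  # 19/64 5/16
    print(decode(low, 4, probs))  # abac
```

The final width is the product of the coded symbols’ probabilities (1/2 · 1/4 · 1/2 · 1/4 = 1/64), which is why likelier predictions cost fewer digits. The decoder here is told the message length; a real coder stores the length or codes an end-of-file symbol.
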
To work within 16-byte float precision, we need to carry away the locked digits: if the high and low are now 0.457594 and 0.458988, we store ‘45’ and now have 0.7594 to 0.8988, and we keep narrowing this range to make the decimal more precise. This long decimal is then stored as a binary number, e.g. 670163=10100011100111010011. I didn’t implement adding the last few letters of the window as branches, i.e. the 17-letter window adds itself to the tree, but before predicting the next letter it could also add its last 16, 15, 14, etc. letters as shorter branches, which would help just a ‘bit’ more. I also didn’t implement removing, from the lower sets, the counts that only come from the higher set, because it hurt compression: e.g. if set 3 has 9 counts total and set 2 has 99, 9 of the counts in set 2 are the same observations and ‘should’ not help us reach the Roof. I’ll look into it more. Lastly, escape letters: the first set we mix is a dummy set with a super small weight that contains every possible letter, in case we need to encode/decode one that hasn’t been seen in the file yet, so it needs a small room in the AC’s high and low bounds. I also hardcoded each probability in this dummy set; common letters get more weight. Compression/decompression takes 2 hours and 16 minutes for 10MB, but Python is slow. RAM use is fairly big because I didn’t implement pruning. My algorithm handles incomplete/noisy information (uncertainty) unsupervised and Online, hence the mixing of window models. A better network or network compression and/or file compression and insight extraction (not decompression of the FILE!), faster code, and less RAM (Working Memory) used all lead us closer to AGI, and so does smaller code (a bit).
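
A minimal sketch of carrying away the locked digits, using the numbers above and `Fraction` to avoid float rounding in the demo. `shift_settled_digits` is a hypothetical name. It only handles the easy case where low and high agree on a leading digit; a complete coder must also handle a range that straddles a digit boundary while it keeps shrinking (e.g. 0.4999 to 0.5001), which this sketch omits.

```python
from fractions import Fraction


def shift_settled_digits(low, high, out):
    """Move leading decimal digits that low and high share into `out`.

    Once both bounds agree on a digit, later narrowing can never change it,
    so it is written out and the range is rescaled by 10 to free precision.
    """
    if not low < high:
        raise ValueError("need low < high")
    while int(low * 10) == int(high * 10):
        digit = int(low * 10)
        out.append(str(digit))
        low, high = low * 10 - digit, high * 10 - digit
    return low, high


if __name__ == "__main__":
    out = []
    low, high = shift_settled_digits(Fraction("0.457594"), Fraction("0.458988"), out)
    print("".join(out), float(low), float(high))  # 45 0.7594 0.8988
```
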

My code is in Python, but for now I’m linking Shelwien’s Green in C++; it’s very similar: “Simple bytewise context mixing demo”.

Before we get to the video, here’s a breakdown of key points to help solidify some main parts of the idea. It’s OK if a few things go over your head; just hold on to my points:

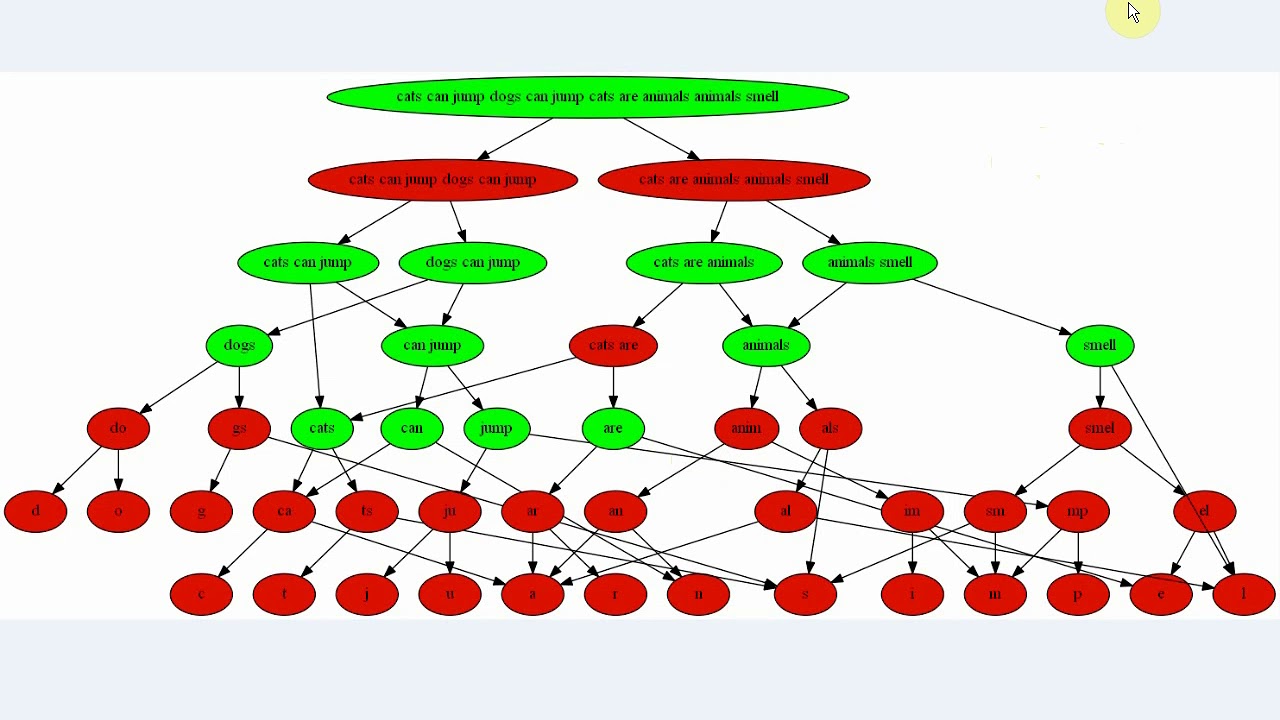

As shown in the video, which presents the big main design, the brain is a collection of mini hierarchies that re-use shared feature nodes linked as contexts.

My working code uses a trie/tree, and each letter and phrase seen so far has a frequency stored based on how many times it (a node) was accessed. Longer phrases have fewer appearances. These are the strengthened connections/weights in the hierarchies!! They decide how much energy goes to a parent (how open the channel is). Multiple nodes in my code get activated, each activates its own parents, and some of those parents are shared by other recognized nodes, e.g. ‘the a’, ‘he a’, ‘e a’, and ‘ a’.

You can also see that if the video’s hierarchy design recognized multiple similar nodes too (translation), e.g. ‘dog’ being activated and triggering linked contexts that ‘cat’ shares as well, they would also share similar prediction candidates. All this works on its own. No backprop! We increment node frequencies based on node accesses, and related nodes like cat/dog are discovered/activated by shared context leaking energy from the cat node to the dog node on its own.
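
A minimal sketch of discovering cat=dog by shared context, assuming a word’s ‘context’ is simply the word right before (L) and right after (R) it. `context_counts` and `shared_context` are hypothetical names, and the overlap score (the smaller of the two counts, summed over shared contexts) is one simple choice, not the author’s formula.

```python
from collections import Counter, defaultdict


def context_counts(words):
    """For each word, count the words seen just before (L) and just after (R) it."""
    ctx = defaultdict(Counter)
    for i, w in enumerate(words):
        if i > 0:
            ctx[w][("L", words[i - 1])] += 1
        if i + 1 < len(words):
            ctx[w][("R", words[i + 1])] += 1
    return ctx


def shared_context(ctx, a, b):
    """Overlap score: for each context both words have, add the smaller count."""
    return sum(min(n, ctx[b][c]) for c, n in ctx[a].items() if c in ctx[b])


if __name__ == "__main__":
    words = "the cat eats food . the dog eats food . the cat sleeps . a car drives".split()
    ctx = context_counts(words)
    print(shared_context(ctx, "cat", "dog"))  # 2 ('the' before, 'eats' after)
    print(shared_context(ctx, "cat", "car"))  # 0
```

A context only contributes once it has been seen with both words, so it takes at least two observations (one per word) before any similarity appears, matching the frequency argument in the intro.
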

The prediction candidates at the ends also retain energy from prior activation: more if they were activated more recently (large proof is below, BTW), and less if it was a similar node that activated them. That’s Temporary Energy; it can look at all of the last 1,000 words!

Permanent Energy is always-active memory: Rewards. Look at Facebook’s Blender chatbot (video below), which uses a Dialog Persona to make it talk about its agenda! It can have multiple goal nodes.

My design allows goal nodes to update by leaking reward to similar nodes, e.g. food=money or food=dinner, so now I will start predicting/talking about money in the next words. Root goals are not as changeable; the artificial rewards are sub-goals.

The energy in the net defines itself over time: energy from multiple activated nodes leaks to a single candidate prediction (out of the top k predictions, usually the most probable one, especially if its probability is much higher than the other candidates’). My code stores the predicted Next Letter Online right now, and that is what generates new discoveries that are somewhat true, depending on how confident the prediction probability is.

A good brain exploits and uses the likeliest nodes as predictions, not random data collection. But in dreams you can see we do not generate/talk about our desired/probable nodes, but random ones, especially ones activated during the last day. It wants you to explore and generate more randomly by not using the Permanently Rewarded goal nodes, and to look around the last day’s experiences, searching for discoveries for a while.

The goal is to see how to predict/get to the desired outcome… It needs a lot of data to be sure it “made it” and isn’t simply being told “aliens arrived, u can stop working on artificial organs now”. It sometimes has to search for a while and go through many sub goals until it fits the data… This part massively confuses me: how it actually knows it reached the answer/implemented it in real life or has a solid discovery… Or rather, how it knows which sub goals to make and which get met… Pretty much, we want it to make many desired discoveries, and to listen to us if it needs more data (either time to implement its idea or, IOW, to be fed new data… to get new sub goal question rewards).

Video:

I should have also included in the video that Summarization, Translation, and Elaboration are controlled by how much energy is allowed: when you Summarize, you only say important features, not frequent, unrelated, or unloved words.

I think one key difference between ANNs for text and my design is that their network doesn’t store nodes that can be displayed as solid letters and phrases as mine can. For example, the lowest-layer nodes a, b, and c may all point to the parent node ‘abc’, which has, e.g., a count of 5 times seen so far, while the ‘a’ that builds it has only 3 accesses so far. So instead of blended words or phrases like ‘cotg’ made from cat/dog, you might even get ‘cOtG’, where some children affect the node less. I’m unsure yet if that’s useful.

From testing GPT-2 and making my own algorithm last year, I have strong evidence that nodes retain energy and that frequency predictions are helped by energy already sitting in related nodes. As you know, when you hear something, it stays on your mind for quite some time. The last 80 words you read are all energized, stored in order but as chunks; they are no longer on the paper but in your brain! They need to remain active there. The more activated a similar phrase node is, the more activated its prediction parents will be. Word nodes may also leak energy to other similar word nodes. So the energy sitting around will definitely add to the prediction energies, see? If ‘leaf’ was activated 40 words ago and our predictor predicts letters from word nodes, the leaf and grass nodes etc. will also be pre-activated a bit. These energies eventually fade off your mind exponentially.

We can see that Facebook’s Blender also uses Permanent energies, via a “Dialog” as they call it, making it *always* talk/ask as if it has an agenda, e.g. being a communist. These nodes are hard-coded with reward from birth and *should* update other related nodes to create new sub goals for the food goal node, which itself will never change because its reward is more hardcoded; you know you can’t change the food node, as it’s critical for survival.

My main points here are: frequency in predictions runs my code; recognizing similar phrases increases counts (they are found using frequency, and the closest ones in delay time affect it most); using energy to boost related predictions helps a ton; and so does permanent reward. See how all that and more work in the hierarchies? What more can we do!? Can you add anything!?
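
A minimal sketch of how these ingredients could feed one prediction, assuming a simple weighted sum of frequency probabilities, Temporary Energy, and Permanent (reward) energy, followed by renormalization. The function `combine` and the weights `temp_weight` and `reward_weight` are hypothetical placeholders, not values or code from the post; the post’s own mixer for the frequency sets is the Roof-based one described at the top.

```python
def combine(freq_probs, temp_energy, reward, temp_weight=0.3, reward_weight=0.1):
    """Blend frequency predictions with temporary and permanent (reward) energy.

    Each argument maps a candidate word to a score; missing words score 0.
    The blended scores are renormalized so they add up to 1.
    """
    candidates = set(freq_probs) | set(temp_energy) | set(reward)
    scores = {
        w: freq_probs.get(w, 0.0)
        + temp_weight * temp_energy.get(w, 0.0)
        + reward_weight * reward.get(w, 0.0)
        for w in candidates
    }
    total = sum(scores.values())
    return {w: s / total for w, s in scores.items()} if total > 0 else {}


if __name__ == "__main__":
    freq = {"food": 0.5, "dog": 0.3, "money": 0.2}
    recent = {"dog": 1.0}   # 'dog' was in the last few words
    goals = {"money": 1.0}  # a rewarded goal node
    p = combine(freq, recent, goals)
    print(max(p, key=p.get))  # dog
```

Raising `reward_weight` (e.g. to 1.0) makes the rewarded goal ‘money’ win instead, which is the “talk about its agenda” effect in miniature.
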

I’m really excited if even just one of you can advance the AGI design I’m at. I’ve seen a lot of ANN variants (GANs, LSTMs, Autoencoders, etc.), and they seem to have things like residual connections, layer norm, convolution windows, many stacked feedforward networks, etc., while my design sticks to a single collection of hierarchies. Of course you can get the same result in similar ways, or by breaking it down into multiple tools with math tricks. But I’m looking for a more explainable architecture that unifies everything into the same general network; the math tricks can come later. That’s why I say in my work that to predict the next word, we e.g. look at the last context (like GPT-2 does), activate multiple similar phrase nodes in the hierarchy, and see what entails them all; they are all little judges/hierarchies. I don’t hear many people saying this, just RNN this, GAN that, no actual straightforward theory.

Transformers have been shown to be tangibly better than LSTMs across many areas (check out OpenAI and BERT, etc.), and the “Attention Is All You Need” paper, written by Google researchers, says, well, it in the title. Transformers are parallel and can process much faster than RNNs. And you don’t need the recurrence or the LSTM scheme, which is confusing.

I’ve read many articles on Transformers; they describe a long process with many parts, and after reading them all there is no explanation anywhere of how it actually works. I’m the only one on Earth saying how GPT-2 works. There is some explanation if you look at Word2Vec or the Hutter Prize algorithms like PPM, but no one “knows” how GPT-2 works.

Energy remains in nodes and fades…see:

Improved edit:

Our brain is always dreaming, even when not dreaming or daydreaming. We actually recall stored features (especially energized or loved ones, e.g. from the last day when asleep; we can do that during the day too, dreaming just makes sure you explore, though you rarely exploit in dreams, e.g. work on AGI only) and recreate/create an experience in the brain; we can’t feel the real world.

Even more proof:

The proof that Temporarily Energized nodes do affect prediction is not just that recently heard nodes must stay active in memory, but also this: if you had only a tiny dataset, e.g. 0KB, and were shown the prompt "the cat and dog cat saw a cat and the", the next word would not be predicted well, but out of the 10 words in that prompt, 3 are “cat”, so we can slap a 0.3 probability on predicting “cat” next! This is much more powerful if we discover cat=dog by shared context: then cat/dog appears 4 times in the prompt. Likewise, if the past paragraph is about trees, it will often talk about grass, leaves, trees, etc. Because paragraphs almost always contain “the” more than any other word, we ignore common words.
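
A minimal sketch of the prompt example above: each word’s Temporary Energy is its share of the recent words, optionally with an exponential fade so older words count less (as described earlier) and with common words ignored. `temporary_energy`, `decay`, and the stop-word set are hypothetical choices, not the author’s code.

```python
from collections import defaultdict


def temporary_energy(words, decay=1.0, ignore=frozenset()):
    """Energy per word from the recent context; decay=1.0 means no fading.

    The most recent word gets weight 1, the one before it `decay`, then
    decay**2, and so on. Dividing by the number of words keeps decay=1.0
    equal to the plain frequency in the prompt.
    """
    energy = defaultdict(float)
    for age, w in enumerate(reversed(words)):
        if w not in ignore:
            energy[w] += decay ** age
    return {w: e / len(words) for w, e in energy.items()}


if __name__ == "__main__":
    prompt = "the cat and dog cat saw a cat and the".split()
    print(temporary_energy(prompt)["cat"])  # 0.3
    faded = temporary_energy(prompt, decay=0.9, ignore={"the", "a", "and"})
    print(max(faded, key=faded.get))  # cat
```
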

Also, Permanently Active and Semi-Permanently Active nodes carry reward, which makes you talk about the question goals you love/desire. So our “GPT-2” would talk about likely ways to get what it wants. Mental RL. If nodes with Permanent activity affect predictions, it’s more likely that Temporarily Active nodes also affect prediction.

With my viz in the video (the image of the hierarchy), input goes up and activates nodes, and also the predicted next word (parent nodes), but only the winner node (usually the top-probability candidate). The energy chaining the text in my design does not need to flow back down the net (generate output) to do this, because energy just leaks and keeps leaking. As you talk to yourself in your brain, you hear the predicted next word and it loops back into your net from the bottom, but maybe it doesn’t need to, for the reason I just explained: it would only loop back and activate the same node anyway. Another thing I said was that you could duplicate the net and flip it so input goes up and output goes up and out, not back down, but again, that’s unneeded duplication and doesn’t make it faster in this case.

Also, humans usually read text word by word, not in parallel, so far-back nodes are losing energy; you must implement that in a parallel approach. Also, as it talks to itself and to humans, it can only generate 1 word of the future at a time and doesn’t have it all yet. So for training on big data you could use a parallel approach, but not for new data. Also, the brain learns a bi-directional context around a word feature: when it predicts the next word it only uses the left-hand-side past, but its memory lets it see into the future before writing the next words. So in this sense, learning a whole sentence fed in in parallel doesn’t make the hierarchies any different: it increments frequencies (strength), adds nodes/connections, etc., the same way as the non-parallel approach, and the brain predicts by looking ahead and also uses the bi-directional network storage to recognize the feature it is looking at.

So learning data in parallel seems to work (storage-wise, all data/relationships can be captured), and prediction of new words/data is done in the net by leaking activity; bi-directional translation and future look-ahead still work for prediction too.
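
A minimal sketch of the storage claim, assuming the stored frequencies are n-gram counts: counting chunks independently gives exactly the same counts as one sequential pass, as long as each chunk is extended by n-1 letters and each n-gram is counted only by the chunk it starts in. `count_ngrams` and `count_ngrams_chunked` are hypothetical names. The default `mapper` is the built-in `map`; because the chunks are independent, a parallel map such as `concurrent.futures.ProcessPoolExecutor().map` could be passed instead.

```python
from collections import Counter
from functools import partial


def count_ngrams(text, n):
    """Sequential pass: count every n-letter string in text."""
    return Counter(text[i:i + n] for i in range(len(text) - n + 1))


def count_ngrams_chunked(text, n, chunk=8, mapper=map):
    """Count fixed-size chunks independently, then add the counts together.

    Each piece is extended by n-1 letters so n-grams that start near the end
    of a chunk are still complete; an n-gram is counted only by the chunk it
    starts in, so nothing is counted twice.
    """
    pieces = [text[s:s + chunk + n - 1] for s in range(0, len(text), chunk)]
    return sum(mapper(partial(count_ngrams, n=n), pieces), Counter())


if __name__ == "__main__":
    text = "the cat and dog cat saw a cat and the"
    print(count_ngrams_chunked(text, 3) == count_ngrams(text, 3))  # True
```

This only covers storage; as noted above, prediction on new data still has to run one step at a time.
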

Lastly, if you liked anything above, I have a book that explains a very large portion of my knowledge: https://workupload.com/file/skzHXkFX9B9